```python
import pandas as pd
from sklearn.model_selection import train_test_split

df = pd.read_csv("data/canada_usa_cities.csv")
X = df.drop(columns=["country"])
y = df["country"]

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=123
)
```

The fundamental tradeoff and the golden rule

Reminder:

- `score_train` is our training score (or the mean train score from cross-validation).
- `score_valid` is our validation score (or the mean validation score from cross-validation).
- `score_test` is our test score.
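To connect these three names to code, here is a minimal sketch of where each score comes from. It assumes a synthetic binary dataset from `make_classification` as a stand-in for the cities data and an arbitrary `max_depth=3`. Note that the key `"test_score"` returned by `cross_validate` holds the scores on the validation folds, i.e., `score_valid`, not `score_test`.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_validate, train_test_split
from sklearn.tree import DecisionTreeClassifier

# Assumption: synthetic stand-in for the cities data (2 numeric features, 2 classes)
X, y = make_classification(
    n_samples=300, n_features=2, n_informative=2, n_redundant=0, random_state=123
)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=123)

model = DecisionTreeClassifier(max_depth=3, random_state=123)

# score_train and score_valid: means over the 10 cross-validation folds of the training split
scores = cross_validate(model, X_train, y_train, cv=10, return_train_score=True)
score_train = scores["train_score"].mean()
score_valid = scores["test_score"].mean()  # "test_score" here means the validation folds

# score_test: fit once on the whole training split, then score on the held-out test split
model.fit(X_train, y_train)
score_test = model.score(X_test, y_test)

print(f"score_train={score_train:.3f}  score_valid={score_valid:.3f}  score_test={score_test:.3f}")
```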

The “fundamental tradeoff” of supervised learning

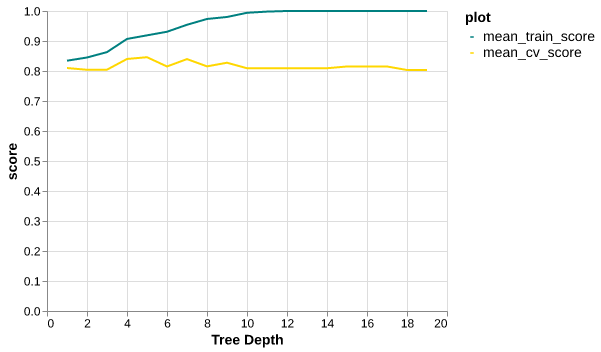

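As model complexity ↑, `score_train` tends to ↑ and the gap `score_train − score_valid` tends to ↑ as well: a more complex model fits the training data more closely but is more likely to overfit, so its validation score lags further behind.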
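A small sketch of the tradeoff, assuming synthetic data from `make_classification` with some label noise (`flip_y=0.1`) instead of the cities data. On data like this, the gap between the mean train and validation scores typically widens as `max_depth` grows, and the unrestricted tree (`max_depth=None`) fits every training fold perfectly; the exact numbers will differ on other data.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_validate
from sklearn.tree import DecisionTreeClassifier

# Assumption: synthetic data with ~10% of labels randomly reassigned
X, y = make_classification(n_samples=300, n_features=5, flip_y=0.1, random_state=0)

train_scores, gaps = {}, {}
for depth in [1, 3, 5, None]:  # None lets the tree grow until its leaves are pure
    model = DecisionTreeClassifier(max_depth=depth, random_state=0)
    scores = cross_validate(model, X, y, cv=5, return_train_score=True)
    score_train = scores["train_score"].mean()
    score_valid = scores["test_score"].mean()
    train_scores[depth] = score_train
    gaps[depth] = score_train - score_valid
    print(f"max_depth={depth}: score_train={score_train:.3f}, "
          f"score_valid={score_valid:.3f}, gap={gaps[depth]:.3f}")
```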

How do we pick a model that generalizes better?

```python
import pandas as pd
from sklearn.model_selection import cross_validate
from sklearn.tree import DecisionTreeClassifier

results_dict = {"depth": list(), "mean_train_score": list(), "mean_cv_score": list()}

# Try max_depth = 1, ..., 19 and record the mean train and validation scores from 10-fold CV
for depth in range(1, 20):
    model = DecisionTreeClassifier(max_depth=depth)
    scores = cross_validate(model, X_train, y_train, cv=10, return_train_score=True)
    results_dict["depth"].append(depth)
    # cross_validate's "test_score" is the score on each validation fold
    results_dict["mean_cv_score"].append(scores["test_score"].mean())
    results_dict["mean_train_score"].append(scores["train_score"].mean())

results_df = pd.DataFrame(results_dict)
```

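The row of `results_df` with the highest mean cross-validation score:

```
depth               5.000000
mean_train_score    0.918848
mean_cv_score       0.845956
Name: 4, dtype: float64
```

```python
best_depth = int(results_df.sort_values("mean_cv_score", ascending=False).iloc[0]["depth"])
best_depth
```

```
5
```

The Golden Rule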

Even though we care the most about the test score:

THE TEST DATA CANNOT INFLUENCE THE TRAINING PHASE IN ANY WAY
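One common way to break this rule is to choose a hyperparameter by looking at test scores. The sketch below does exactly that, as an illustration of what not to do; it assumes synthetic data from `make_classification` and a `max_depth` grid of 1 to 10. Because the reported number is the maximum over ten test scores, it is biased upward and no longer estimates how the model would do on genuinely unseen data.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Assumption: synthetic data in place of the cities data
X, y = make_classification(n_samples=400, n_features=5, flip_y=0.1, random_state=1)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=1)

# VIOLATION: every candidate max_depth is scored on the test split,
# so the test data decides which model we keep.
test_scores = {}
for depth in range(1, 11):
    model = DecisionTreeClassifier(max_depth=depth, random_state=1).fit(X_train, y_train)
    test_scores[depth] = model.score(X_test, y_test)

leaky_depth = max(test_scores, key=test_scores.get)

# The reported score is the best of ten test scores, hence optimistically biased.
print("depth picked using test data:", leaky_depth, "test score:", test_scores[leaky_depth])
```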

Golden rule violation: Example 1

Golden rule violation: Example 2

How can we avoid violating the golden rule?

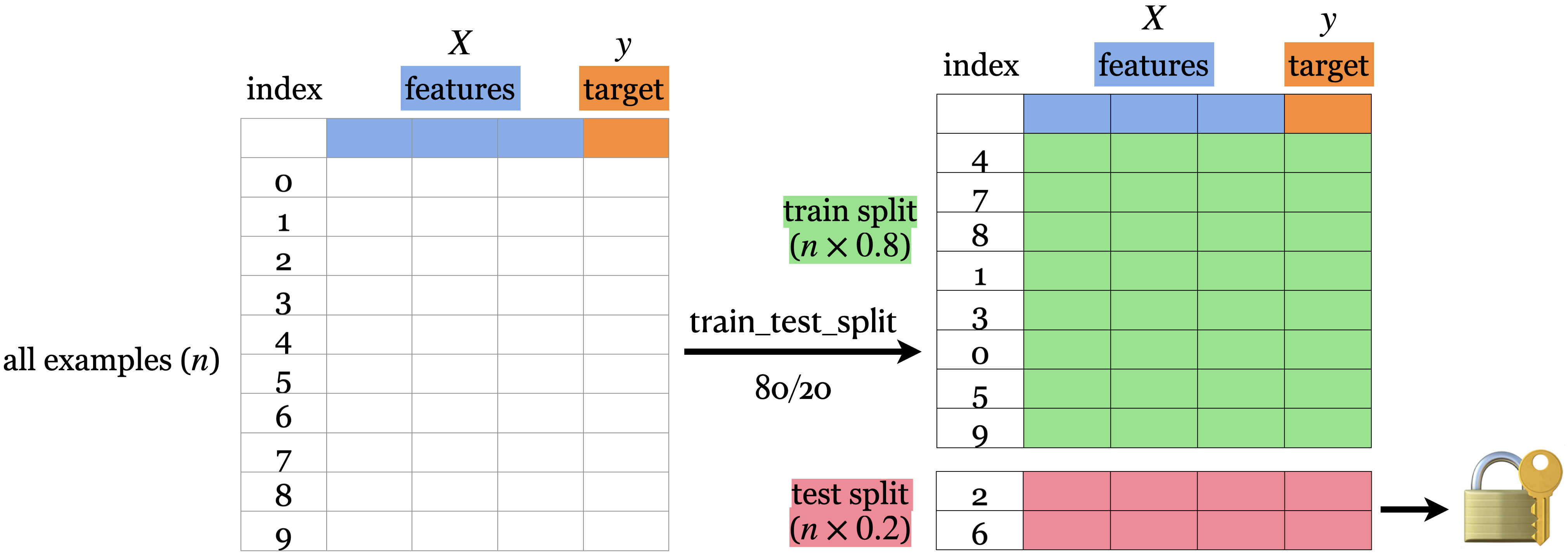

Here is the workflow we’ll generally follow.

- Splitting: Before doing anything, split the data `X` and `y` into `X_train`, `X_test`, `y_train`, `y_test` (or `train_df` and `test_df`) using `train_test_split`.
- Select the best model using cross-validation: Use `cross_validate` with `return_train_score=True` so that we get access to the training score in each fold (for instance, if we want to plot train vs. validation error).
- Scoring on test data: Finally, score on the test data with the chosen hyperparameters to examine the generalization performance.
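The three steps above can be sketched end to end as follows. This is a minimal illustration, assuming synthetic data from `make_classification` in place of the cities data and a `max_depth` grid of 1 to 10; refitting the chosen model on the whole training split before scoring on the test split is one common choice, not the only one.

```python
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_validate, train_test_split
from sklearn.tree import DecisionTreeClassifier

# Assumption: synthetic stand-in for the real data
X, y = make_classification(n_samples=400, n_features=5, flip_y=0.1, random_state=1)

# 1. Splitting: set the test split aside before doing anything else
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=1)

# 2. Model selection with cross-validation on the training split only
rows = []
for depth in range(1, 11):
    scores = cross_validate(
        DecisionTreeClassifier(max_depth=depth, random_state=1),
        X_train, y_train, cv=10, return_train_score=True,
    )
    rows.append({
        "depth": depth,
        "mean_train_score": scores["train_score"].mean(),
        "mean_cv_score": scores["test_score"].mean(),  # validation folds
    })
results_df = pd.DataFrame(rows)
best_depth = int(results_df.loc[results_df["mean_cv_score"].idxmax(), "depth"])

# 3. Scoring on test data: refit with the chosen depth, then touch the test split once
final_model = DecisionTreeClassifier(max_depth=best_depth, random_state=1).fit(X_train, y_train)
test_score = final_model.score(X_test, y_test)

print(results_df.round(3))
print("best depth:", best_depth, "test score:", round(test_score, 3))
```

The test split appears only in the final `score` call, after `best_depth` has been fixed using the training data alone.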